AI Advertising Laws Around the World: What Brands Need to Know in 2026

Passionate content and search marketer aiming to bring great products front and center. When not hunched over my keyboard, you will find me in a city running a race, cycling or simply enjoying my life with a book in hand.

Your country-by-country compliance map for AI-generated ad creative in 2026.

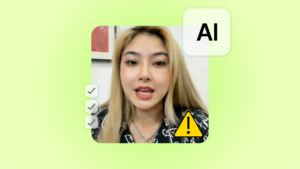

If you’re running AI-generated ads on Meta, TikTok, or YouTube, your compliance exposure doesn’t stop at the US or EU border. At least seven additional markets now have binding, pending, or voluntary rules that reach AI advertising, and the approaches vary dramatically by region.

South Korea mandates labeling on every AI-generated ad. China requires both visible labels and embedded metadata. India makes platforms liable for unlabeled synthetic content. Canada and Australia are applying existing consumer protection frameworks to AI advertising with growing enforcement activity.

We’ve already covered the US and EU regulations in separate posts. This one picks up where those left off: seven more jurisdictions, country by country, covering what the law says, who’s liable, what the penalties look like, and whether human creator content sidesteps the rules.

TL;DR:

| Country | What triggers it | Who’s liable | Penalty | Human UGC avoids it? | Status |
|---|---|---|---|---|---|
| Canada | AI-generated/altered ad content without #MadeWithAI or #AIcreated disclosure | Brand, influencer | Self-regulatory (Ad Standards) + Competition Act backstop: up to C$10M corporate first offence | Yes, real creators need only #Ad | Live (Oct 2025 guidelines) |
| Australia | Misleading/deceptive AI ad claims; AI-washing | Brand, advertiser | Up to A$100M (from Mar 2026) or 3x benefit/30% turnover | Yes, authentic content not misleading | Live (existing ACL) |
| South Korea | AI-generated ad without label; virtual human without disclosure | Creator, brand, platform | 5x damages; fines up to ₩1B for platforms | Yes, real humans not covered | Live (Jan 2026) |
| China | AI content without explicit + implicit labels | AI provider, platform, content publisher | RMB 10K-100K per violation; suspension | Yes, human content has no labeling obligation | Live (Sep 2025) |
| India | Synthetically generated content without user declaration + platform label | Platform (intermediary), user who posted | Loss of safe harbour; IT Act penalties up to ₹25 lakh + imprisonment | Yes, no SGI obligations for real content | Live (Feb 2026) |
| Brazil | High-risk AI without transparency (pending) | Developer, deployer | Up to R$50M or 2% revenue (proposed) | Likely yes (pending final text) | Pending (bill in Chamber) |
| Japan | Customer-facing AI that doesn’t self-identify | AI operator | None, no fines in current law | N/A, voluntary framework | Live (Jun 2025, non-binding) |

Canada: The “Self-Regulation” Model

Canada doesn’t have a federal AI advertising law. The previously proposed Artificial Intelligence and Data Act (AIDA) died on the order paper in January 2025 when Parliament was prorogued. New AI legislation may still surface in 2026, but as of May 2026 none has been tabled.

What Canada does have is a well-developed industry self-regulation system, backed by a statutory fallback that carries real penalties.

What The Ad Standards Guidelines Require

In October 2025, Ad Standards Canada released updated Influencer Marketing Disclosure Guidelines that specifically address AI-generated content and AI-created influencers. The guidelines are straightforward: if ad content is generated or significantly altered by AI, that fact must be disclosed. The recommended hashtags are #MadeWithAI or #AIcreated.

AI-generated influencers get their own set of rules. They must follow the same material connection disclosure requirements as human influencers, with best practice being a clear label like #VirtualInfluencer or #AIinfluencer. The Canadian Code of Advertising Standards also imposes a practical limitation: AI-generated influencers shouldn’t provide testimonials about product qualities they can’t experience, like smell, taste, or physical sensation. Posts created using AI filters or tools must remain truthful about the product’s actual benefits and attributes.

For paid partnership disclosure, #Ad used on its own (not combined with other words like #Advert or #Sponsored) remains the “gold standard” hashtag, with #Gifted and #InvitedGuest now recognized as acceptable alternatives for those specific scenarios.

The Competition Act Backstop

Ad Standards is industry self-regulation, not government enforcement. Compliance depends on member commitments and a complaint resolution process rather than statutory penalties. That distinction matters if you’re thinking about how much weight these guidelines carry.

However, the Competition Act provides a statutory backstop with real teeth. False or misleading advertising is illegal under the Act, and penalties scale quickly: individuals face fines up to C$750,000 on a first offence and C$1 million on subsequent violations. Corporations face up to C$10 million on a first offence and C$15 million on subsequent ones.

The Competition Bureau’s 2026-27 Annual Plan explicitly lists AI as an enforcement focus alongside deceptive marketing practices. So while the AI-specific disclosure guidelines are voluntary, the underlying prohibition on misleading advertising is not.

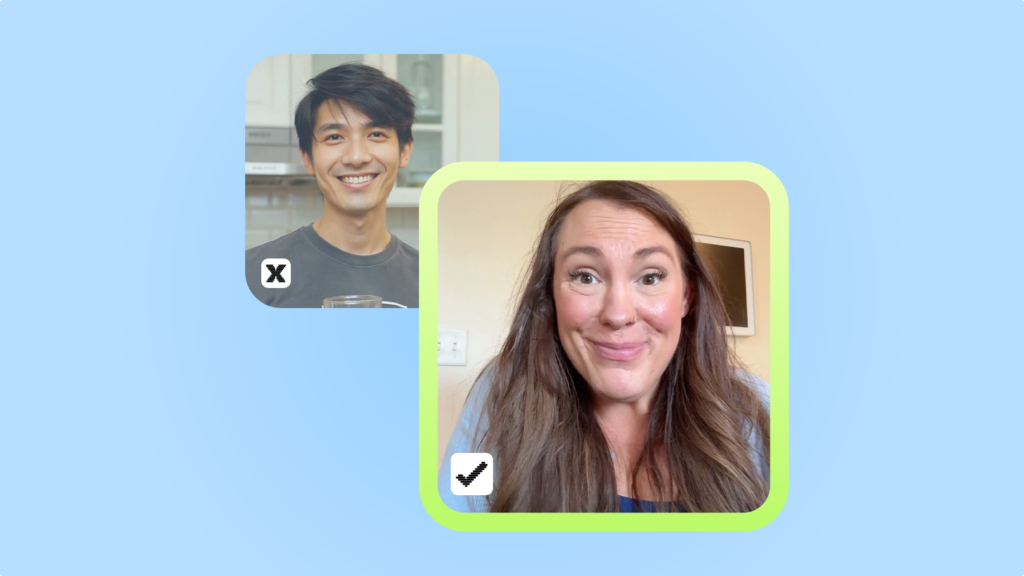

The human UGC angle: Real creators filmed on camera need only disclose the paid partnership with #Ad. No #MadeWithAI or #AIcreated disclosure is required. And unlike AI-generated influencers, real creators face no restrictions on testifying about product qualities, because they actually experienced the product.

Australia: The “Consumer Law” Approach

Australia has taken a different path from most countries on this list. Rather than writing new AI-specific legislation, the government reviewed its existing consumer protection framework and concluded it already covers AI. The Australian Treasury’s October 2025 final report on AI and the Australian Consumer Law (ACL) found that the existing principles-based protections are “generally well-suited” for AI-enabled goods and services. The core finding: the ACL’s prohibition on misleading or deceptive conduct applies regardless of the technology used to create the content, so AI-generated advertising is already in scope.

ACCC Enforcement: From Warnings to Fines

The practical question for brands isn’t whether the law covers AI advertising (it does), but whether regulators are actually enforcing it. And on that front, the answer shifted decisively in 2026.

The ACCC’s 2025–26 and 2026–27 compliance priorities explicitly target deceptive digital conduct, including influencer marketing, online reviews, AI-generated fake content, and AI-washing. The regulator has been vocal about AI-washing specifically: businesses must make accurate claims that genuinely reflect the level of AI use in their products, and can’t hide that manual processes run behind ostensibly “AI-powered” services.

March 2026 marked the transition from warnings to enforcement. The ACCC issued its first influencer-related financial penalty (PhotobookShop, fined A$39,600 for undisclosed influencer promotions) and commenced Federal Court proceedings against Microsoft for allegedly misleading and deceptive conduct affecting approximately 2.7 million customers. The fines are small so far, but the direction is clear.

Penalty Framework

The financial exposure for non-compliance grew significantly in March 2026. Maximum ACL penalties for corporations now reach the greater of A$100 million (up from A$50 million) and either three times the benefit attributable to the contravention or, where a court can’t determine that benefit, 30% of adjusted turnover during the breach period. Those numbers put Australian consumer law penalties in the same league as the EU AI Act for large companies.
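To see how the “greater of” test plays out, here is a minimal Python sketch. It assumes the A$100 million base figure described above and, as in the existing ACL penalty structure, that the 30%-of-turnover limb applies only when a court can’t determine the benefit obtained. The function name and inputs are hypothetical and illustrative; this is not an official calculator or legal advice.

```python
from typing import Optional

# Base maximum per contravention, as described above (assumed A$100M).
BASE_MAX_AUD = 100_000_000


def max_acl_penalty(benefit_aud: Optional[int], adjusted_turnover_aud: int = 0) -> int:
    """Hypothetical helper: maximum corporate ACL penalty for one contravention.

    benefit_aud: value of the benefit attributable to the contravention,
        or None if a court cannot determine it.
    adjusted_turnover_aud: adjusted turnover during the breach period; only
        used when the benefit cannot be determined.
    """
    if benefit_aud is not None:
        alternative = 3 * benefit_aud
    else:
        # 30% of adjusted turnover, rounded down to whole dollars.
        alternative = adjusted_turnover_aud * 30 // 100
    # The maximum is whichever figure is greater.
    return max(BASE_MAX_AUD, alternative)


print(max_acl_penalty(20_000_000))           # 100000000 (3x benefit is only A$60M)
print(max_acl_penalty(None, 1_000_000_000))  # 300000000 (30% of A$1B turnover)
```

For a large advertiser, the benefit or turnover limb, not the headline base figure, is what drives the ceiling.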

Two other developments are worth flagging: ACMA now mandates disclosure when a synthetic AI voice hosts a radio program or news broadcast (February 2026), and Privacy Act amendments taking effect December 10, 2026 will require disclosure when automated systems use personal data to make decisions that significantly affect individuals.

The human UGC angle: Content filmed by real creators doesn’t raise misleading conduct concerns under the ACL (assuming the content itself is truthful). There’s no AI-washing risk when the content is authentic, no synthetic voice disclosure requirement, and the only obligation is standard #Ad disclosure for paid partnerships.

South Korea: The “Ad Labeling Rule” (effective January 2026)

South Korea has the most directly advertising-targeted AI regulation on this list. Starting in January 2026, mandatory AI labeling applies to all AI-generated or AI-assisted digital advertisements. Anyone who creates, edits, or posts AI-generated photos or videos must label them as AI-made, and users are prohibited from removing or tampering with those labels once they are affixed.

The rules are especially strict around endorsements. The Korea Fair Trade Commission and the Ministry of Food and Drug Safety have clarified that ads featuring AI-generated “virtual humans” recommending products must disclose that the endorser is not real, or the ad will be classified as unfair advertising. AI-generated doctors or experts recommending food or pharmaceutical products are treated as outright deceptive. Platform operators are also responsible for ensuring advertiser compliance, including providing labeling tools and notifying users of their obligations.

Penalties are steep. Punitive damages can reach five times the actual losses for knowingly distributing false or fabricated information online. Media outlets face fines up to ₩1 billion (~$684,000) for repeated distribution of confirmed false or manipulated content. Enforcement is fast, with 24-hour review cycles and an emergency process that can block harmful ads before a full review is complete.

The human UGC angle: Real human creators in ads are not covered by the labeling mandate. No “AI-made” label is required, no virtual human disclosure is needed, and standard paid partnership disclosure is the only obligation.

China: The “Dual-Label Rule” (effective September 2025)

China’s approach is the most technically prescriptive on this list. The Administrative Measures for the Labeling of AI-Generated Content took effect on September 1, 2025, issued by the Cyberspace Administration of China alongside a mandatory national standard.

The system requires two layers of labeling. Explicit labels are visible to users: text, icons, or audio cues clearly stating “AI-generated.” For images, the label text height must be at least 5% of the length of the image’s shortest side. Audio labels can use spoken indicators or even a Morse code rhythm encoding “AI.” Implicit labels are metadata embedded in the file itself, recording content attributes, the provider’s name, and a content identifier.
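Both explicit-label rules are simple enough to check in a creative pipeline. The Python sketch below computes the minimum label text height for an image (5% of the shortest side, rounded up to whole pixels as an assumption) and spells out the “AI” audio rhythm from Morse code, where A is dot-dash and I is dot-dot. The function names are hypothetical, not part of any official tooling.

```python
# Morse code for the two letters in "AI": A = .-  I = ..
MORSE = {"A": ".-", "I": ".."}


def min_label_text_height(width_px: int, height_px: int) -> int:
    """Minimum explicit-label text height: at least 5% of the shortest side.

    Uses integer arithmetic and rounds up, so the result never falls below 5%.
    """
    shortest = min(width_px, height_px)
    return (shortest * 5 + 99) // 100


def ai_audio_rhythm() -> list[str]:
    """Translate "AI" into a short/long beat pattern for an audio cue."""
    beats = []
    for letter in "AI":
        for symbol in MORSE[letter]:
            beats.append("short" if symbol == "." else "long")
    return beats


print(min_label_text_height(1080, 1920))  # 54 (5% of 1080)
print(ai_audio_rhythm())                  # ['short', 'long', 'short', 'short']
```

A vertical 1080x1920 ad therefore needs label text at least 54 pixels tall, regardless of the longer side.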

This dual requirement sits on top of China’s earlier Deep Synthesis Provisions (effective January 2023, which already required watermarking deep-synthesis outputs) and the amended Cybersecurity Law (effective January 2026, which adds a dedicated AI compliance provision covering ethics, risk monitoring, and safety assessment). The penalty structure for non-labeling includes warnings, public notices, rectification orders, possible suspension of information updates, and fines of RMB 10,000-100,000 (~$1,400-$14,000).

The human UGC angle: Content created by real humans carries no labeling obligation under the AI content measures. No explicit or implicit labels required.

India: The “Platform Liability Rule” (effective February 2026)

India’s IT (Intermediary Guidelines) Amendment Rules 2026 took effect on February 20, 2026. The rules target “synthetically generated information” (SGI), which covers any computer-generated or altered audio, visual, or audiovisual material that appears authentic.

The compliance burden falls primarily on platforms. Significant Social Media Intermediaries must require users to declare whether content is synthetically generated before publication, verify those declarations through automated tools, and label SGI appropriately. Labels must be “continuous and clearly visible throughout the duration of the content” for visual formats, with audio disclosures required for audio SGI. Where feasible, platforms must also embed permanent metadata or unique identifiers to trace the source. In April 2026, MeitY tightened the rules further, mandating that labels cannot be temporary or removable.
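The declare-verify-label flow can be sketched from the platform side. The Python below is a hypothetical illustration, not MeitY’s specification: the `Upload` record and its fields are invented for the example, and it assumes a platform treats content as SGI if either the user declares it or the automated check flags it.

```python
from dataclasses import dataclass


@dataclass
class Upload:
    """Hypothetical upload record on a platform subject to the SGI rules."""
    media_type: str          # "image", "video", or "audio"
    user_declared_sgi: bool  # the user's answer to the pre-publication declaration
    detector_flagged: bool   # output of the platform's automated verification (assumed)


def sgi_label_plan(upload: Upload) -> list[str]:
    """Return the labeling steps a platform would apply to one upload.

    Assumption: content is treated as SGI if the user declares it OR the
    automated check flags it, so a false "no" declaration is still caught.
    """
    if not (upload.user_declared_sgi or upload.detector_flagged):
        return []  # not treated as SGI: no labeling steps
    steps = []
    if upload.media_type in ("image", "video"):
        steps.append("continuous visible label")
    if upload.media_type == "audio":
        steps.append("audio disclosure")
    steps.append("embed metadata/unique identifier (where feasible)")
    return steps


print(sgi_label_plan(Upload("video", user_declared_sgi=False, detector_flagged=True)))
```

Treating the declaration and the detector as an OR is a deliberately conservative choice: it mirrors the rules’ intent that platforms verify declarations rather than simply trust them.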

The enforcement mechanism is indirect but powerful. Non-compliant intermediaries lose their safe harbour protection from liability for third-party content, which then exposes them to existing IT Act penalties of up to ₹25 lakh (~$30,000) and potential imprisonment. The rules also introduce a three-hour takedown deadline for government or court-ordered content removal, a 92% reduction from the previous 36-hour window.

The human UGC angle: Content created by real humans isn’t “synthetically generated information.” No declaration, labeling, or metadata obligations apply to authentic creator content.

Brazil: The “Pending Legislation” Wildcard

Brazil’s AI landscape is defined by a bill that’s close to becoming law but hasn’t crossed the finish line yet. PL 2338/2023 was approved by the Senate on December 10, 2024 and remitted to the Chamber of Deputies on March 17, 2025. As of May 2026, it remains under review.

The bill adopts an EU AI Act-inspired risk-based classification system, following the same tiered framework of prohibited, high-risk, and lower-risk AI systems. Proposed penalties are significant: up to R$50 million (~$8.5M) per violation or 2% of company revenue in Brazil, whichever is higher, alongside warnings, public disclosure of infractions, and suspension of AI system operations.

While the main AI bill is pending, Brazil’s ECA Digital (enacted April 2026) has already introduced platform obligations for protecting minors from harmful digital content, including AI-generated material. This signals that even without a comprehensive AI law, sector-specific rules are moving forward.

The human UGC angle: No AI-specific advertising law is currently enforceable in Brazil. If PL 2338 passes in its current form, human-created content would likely sit outside its AI transparency and disclosure obligations.

Japan: The “Soft Law” Exception

Japan sits at the opposite end of the regulatory spectrum from South Korea and China. The AI Promotion Act (effective June 2025) is the most permissive AI law passed by any major economy: no fines, no banned applications, no pre-launch approvals. Its 2026 Transparency Guidelines require customer-facing AI to identify itself as “non-human,” but these are non-binding. The broader approach relies on voluntary industry codes coordinated through the Hiroshima AI Process, which agreed on a 2026 Action Plan pushing watermarking and provenance standards globally. Singapore takes a similar voluntary approach through its Model AI Governance Framework, with no binding ad rules or penalties.

The human UGC angle: Japan imposes no compliance overhead on AI-generated ad content, let alone human content. The global trend suggests this will tighten over time, but for now there’s nothing to comply with beyond standard advertising rules.

The Compliance Pattern Across Markets

Three regulatory tiers have emerged, and understanding which tier a market falls into shapes how you build your compliance program.

Hard law: South Korea (mandatory ad labeling with 5x punitive damages), China (dual-label system with fines and suspension), and India (platform liability with safe harbour loss). If you’re running AI-generated ads in these markets, labeling isn’t optional.

Consumer law plus self-regulation: Canada (Ad Standards voluntary guidelines backed by Competition Act penalties up to C$10M) and Australia (ACL misleading conduct prohibition with penalties up to A$100M). No AI-specific statutes, but existing frameworks apply and regulators are actively enforcing. Don’t mistake the lack of a dedicated AI law for a lack of enforcement risk.

Soft law: Japan (voluntary framework, no penalties) and Brazil (comprehensive bill pending). Building compliance now positions you ahead of wherever these markets land next.

Every jurisdiction on this list is moving toward requiring disclosure of AI-generated content in advertising. The only variables are speed and enforcement mechanism. Brands that build compliance around the strictest current standards position themselves for whatever comes next in every other market.
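One way to put “build to the strictest standard” into practice is to keep a per-market table of AI disclosure obligations and apply the union for every market an ad will run in. The Python sketch below is hypothetical: the market codes and obligation strings are shorthand summaries of the rules described in this post, not legal text, and Japan and Brazil carry no binding AI ad obligation today.

```python
# Hypothetical shorthand for the AI-specific obligations summarized in this post.
# These strings are summaries for illustration, not legal text.
AI_OBLIGATIONS: dict[str, set[str]] = {
    "CA": {"#MadeWithAI or #AIcreated"},
    "AU": set(),  # no AI-specific label, but ACL misleading-conduct rules still apply
    "KR": {"AI-made label"},
    "CN": {"explicit AI label", "implicit metadata label"},
    "IN": {"SGI declaration at upload"},
    "BR": set(),  # PL 2338/2023 still pending
    "JP": set(),  # voluntary framework only
}


def required_disclosures(markets: list[str], ai_generated: bool,
                         paid_partnership: bool = True) -> set[str]:
    """Union of disclosures needed for one ad served in all of `markets`.

    Unknown market codes raise KeyError, so adding a new market forces a
    review instead of silently passing with no obligations.
    """
    disclosures: set[str] = set()
    if paid_partnership:
        disclosures.add("#Ad")
    for market in markets:
        obligations = AI_OBLIGATIONS[market]  # look up first so unknown codes fail loudly
        if ai_generated:
            disclosures |= obligations
    return disclosures


print(sorted(required_disclosures(["CA", "KR", "CN"], ai_generated=True)))
print(sorted(required_disclosures(["CA", "KR", "CN"], ai_generated=False)))
```

The second call shows the human UGC case: the same three-market campaign needs only #Ad when no AI-generated creative is involved.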

The Bigger Picture

Whatever the mechanism, the expectation is converging: if your ad creative is AI-generated, disclose it. And the pace is accelerating.

For brands running AI-generated creative across multiple markets, the compliance surface is getting wider with each new rule. For brands working with real human creators, it stays the same everywhere: one disclosure (#Ad), no AI-specific obligations, no labeling workflows to manage by jurisdiction.

Our next post is a full UGC AI compliance playbook that walks through the practical steps: how to audit your creative pipeline, build labeling workflows for hard-law markets, update creator briefs, and decide where human UGC simplifies your operations.

Looking for proven human creators and AI analytics? Check out Billo CreativeOps.

FAQs

Which countries have mandatory AI ad labeling right now?

South Korea (January 2026), China (September 2025), and India (February 2026). The EU AI Act’s Article 50 transparency obligations take effect August 2, 2026, and multiple US state laws are live or imminent.

Do these laws apply to brands based outside the country?

Generally, yes. The practical test: if your ad is served to consumers in that country, assume the local rules apply.

What’s the biggest penalty a brand could face globally?

For AI transparency violations, the EU AI Act allows fines of up to €15 million or 3% of global annual turnover, whichever is higher. Australia’s ACL can reach A$100 million or more. In the US, FTC penalties hit $51,744 per violation with no cap on the total.

Does using human UGC creators avoid all of these regulations?

Yes, for the AI-specific layer. Real creators on camera don’t trigger AI labeling, metadata, or synthetic content rules in any jurisdiction covered here. Standard #Ad disclosure still applies, but the entire AI compliance burden disappears.

SEO Lead

