UGC AI Compliance Guide: Prepare Your Ad Creative for 2026’s AI Regulations

Passionate content and search marketer aiming to bring great products front and center. When not hunched over my keyboard, you will find me in a city running a race, cycling or simply enjoying my life with a book in hand.

Three regulatory deadlines are converging in summer 2026, and they all target the same thing: AI-generated people in advertising. New York’s Synthetic Performer Disclosure Law takes effect June 9. The EU AI Act’s Article 50 transparency provisions become enforceable on August 2. California’s AI metadata mandate kicks in the same day.

For the first time, brands running user-generated content (UGC) and paid social campaigns face enforceable UGC AI compliance requirements across multiple jurisdictions at once. The core rule across all of them: if your ad creative uses AI to generate or substantially modify a human likeness (face, voice, body), disclosure is required. If AI is used for routine production tasks like color grading, noise removal, or copy assistance, it is not.

We have already covered the regulatory landscape in detail: what each US jurisdiction requires, how the EU AI Act works, and what the rules look like across eight additional global markets. This guide focuses on what you should actually do about them.

TL;DR

- Penalties range from $5,000 per violation in New York to €15 million or 3% of global turnover in the EU.

- AI video ads cost ~$3 per video vs. $150 to $400 for human UGC, but the compliance overhead on every AI asset (labels, metadata, platform toggles, audit trail) narrows that gap significantly.

- Human UGC wins on attention (2.4% CTR vs 1.9% for AI in a $100K test) and trust (37-point gap between how advertisers think audiences feel about AI ads and how they actually feel).

- If your creator contracts do not explicitly address AI modification of footage, you have a gap that needs closing before Q3.

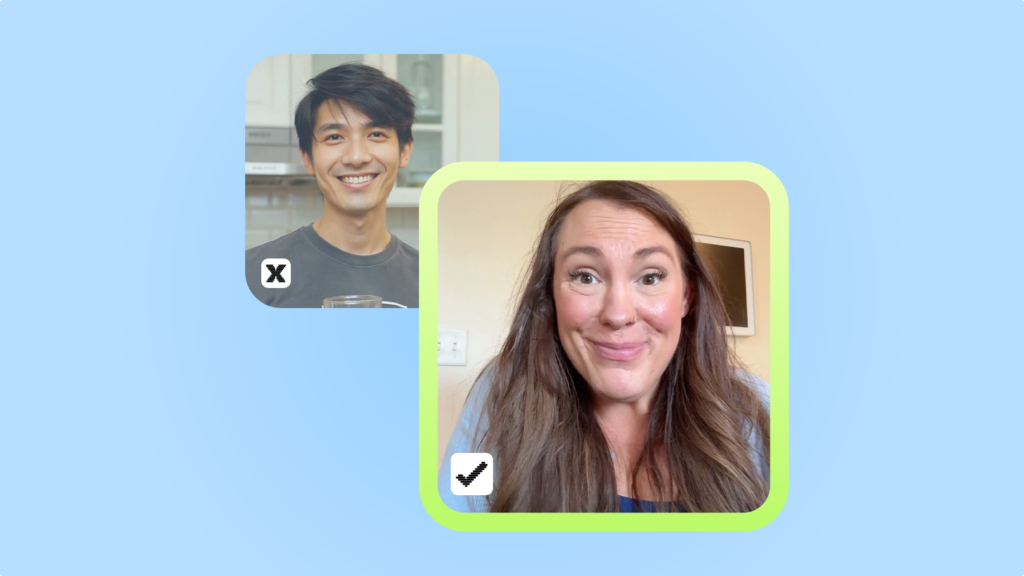

- Human creator content falls outside every AI disclosure definition across all jurisdictions: real people on camera are not synthetic performers.

The Transparency Trigger: When AI Use Requires Disclosure (and When It Doesn’t)

The biggest source of confusion in AI advertising compliance is figuring out which AI uses actually require a label and which ones do not. Disclosure is triggered by materiality, specifically whether AI use could mislead consumers about the content’s authenticity or a person’s identity.

That principle gives brands a practical decision tool. We call it the Transparency Trigger.

What triggers disclosure: synthetic humans, AI voice cloning, digital twins, AI-generated testimonials

If your ad creative contains an AI-generated or substantially modified human likeness, disclosure is required. This is consistent across New York’s Synthetic Performer Disclosure Law, the EU AI Act’s Article 50, and all three major ad platforms. The definitions vary slightly, but they all converge on the same trigger: AI-generated avatars, digital spokespeople, synthetic voices, and simulated extras in video ads all require disclosure.

Liability falls on whoever produces or creates the advertisement, meaning the brand, the agency, or the production company. Platforms are exempt. Our posts on US, EU, and global regulations cover each jurisdiction’s specific definitions, penalties, and timelines in full.

What doesn’t trigger disclosure: color grading, noise removal, background blur, AI copywriting assist, optimization algorithms

Not every use of AI in your creative workflow triggers a compliance obligation. The IAB framework explicitly exempts routine production tasks from its disclosure requirements. AI-assisted editing such as color grading, noise removal, and background blur falls squarely outside the scope. So do optimization algorithms for bid management and audience targeting, AI-powered copywriting assistance, and background production workflows like automated transcription or format conversion.

New York’s law carves out similar exemptions for audio-only ads and AI used solely for translation, and does not apply to real human creators filmed on camera. The practical takeaway is straightforward: if your AI tool changes what the viewer sees in a way that could create a false impression about a real person or event, you need to disclose. If it makes the production process more efficient without altering what the viewer perceives as real, you do not.

The IAB Materiality Test

The IAB framework provides the clearest decision-making tool available. It asks one question: “Does this AI use materially affect authenticity, identity, or representation in ways that could mislead consumers?” If the answer is yes, disclose. If no, move on.

The framework introduces a two-layer compliance model. The first layer is consumer-facing: standardized text labels, badges, icons, or watermarks placed near the ad creative so viewers can see that AI was involved. The second layer is machine-readable: C2PA metadata embedded in the file itself, which supports technical compliance and allows platforms to detect and label AI content automatically.

For your creative team, the workflow looks like this: before any asset goes into production, ask whether AI will generate or substantially modify a human likeness. If yes, plan for both the consumer-facing label and the metadata layer from the start. If no, proceed normally. This two-step check takes less than a minute per asset and eliminates most compliance guesswork before it becomes a problem.
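The two-step check above can be sketched in a few lines of Python. This is a minimal illustration, not an official IAB tool: the `AssetPlan` structure and the `required_layers` function are hypothetical names, and the yes/no answers still come from a human judgment about each asset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetPlan:
    """Pre-production answers for one planned asset (hypothetical structure)."""
    name: str
    ai_generates_likeness: bool               # AI creates a face, voice, or body
    ai_substantially_modifies_likeness: bool  # AI materially alters a real one


def required_layers(plan: AssetPlan) -> list[str]:
    """Return the compliance layers to plan for, or an empty list if none."""
    if plan.ai_generates_likeness or plan.ai_substantially_modifies_likeness:
        return ["consumer-facing label", "C2PA metadata"]
    return []


avatar_ad = AssetPlan("AI spokesperson ad", True, False)
graded_ad = AssetPlan("Color-graded creator video", False, False)

for plan in (avatar_ad, graded_ad):
    layers = required_layers(plan)
    print(plan.name, "->", ", ".join(layers) if layers else "proceed normally")
```

Keeping the answer as two explicit booleans makes the decision easy to record alongside the asset, which helps later when the audit trail is assembled.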

The Gray Areas

The obvious ends of the spectrum are clear. The following scenarios cover the middle ground where most production teams actually get stuck.

1. You use AI to remove and replace the background behind your creator in a product demo video.

The creator’s face, voice, and body are unchanged. The background is now a clean studio setting instead of a messy kitchen. This does not trigger disclosure. The AI modification affects the environment, not the human likeness. The creator is still authentically themselves.

2. You take a creator’s 60-second testimonial and use an AI tool to generate five hook variations that splice, rearrange, or synthesize the creator’s voice to say opening lines they never actually recorded.

This triggers disclosure. Even though the original voice is real, AI is generating new speech that the creator never said. Under New York’s law, this creates a synthetic performance. Under the EU AI Act, it produces content that could “falsely appear to a person to be authentic.” Every hook variation needs a label.

3. You use AI-generated 3D product renders in a b-roll section while a real creator appears on camera in other parts of the same ad.

The product renders do not trigger disclosure because they contain no human likeness. The creator segments are real footage. As long as AI is not generating or modifying the human elements of the ad, the combined piece does not require synthetic content disclosure.

4. You run your creator’s video through CapCut’s AI auto-captions feature, which adds stylized animated text overlays.

This does not trigger disclosure. AI-generated captions, text overlays, and script assistance are explicitly exempt on every major platform. YouTube’s policy confirms that AI used for productivity tasks like automatic captions does not require the synthetic content label. The same applies to AI-written ad copy, hashtag suggestions, and script drafts.

5. You use an AI beauty filter that smooths skin, adjusts lighting on the creator’s face, and subtly reshapes facial features.

This is the hardest call. Light touch-up (smoothing, lighting) is comparable to standard post-production and likely falls below the materiality threshold. But if the filter substantially changes the creator’s appearance to the point where it could mislead viewers about what the person actually looks like, it starts to cross the line. The safest approach: if you would not feel comfortable telling the creator “we didn’t change how you look,” the modification is probably material enough to disclose.
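One way to make these five calls repeatable is to record what the AI touched and whether the change is substantial. The sketch below is an assumption-laden illustration: the `triage` function, the element names, and the "manual review" outcome are hypothetical, and scenario 5 is deliberately left undecided because the text treats it as a judgment call.

```python
from typing import Optional

# Elements that count as human likeness in this guide.
HUMAN_LIKENESS = {"face", "voice", "body"}


def triage(ai_touches: set, substantial: Optional[bool]) -> str:
    """Classify one scenario by what AI touches and how heavily.

    substantial=None means the team has not yet decided whether the
    change is material, so the asset goes to manual review.
    """
    if not (ai_touches & HUMAN_LIKENESS):
        return "no disclosure"
    if substantial is None:
        return "manual review"
    return "disclose" if substantial else "no disclosure"


scenarios = {
    "1 background replacement": ({"background"}, None),
    "2 synthesized hook lines": ({"voice"}, True),
    "3 3D product renders": ({"product"}, None),
    "4 auto-captions": ({"captions"}, None),
    "5 beauty filter": ({"face"}, None),
}

results = {name: triage(*args) for name, args in scenarios.items()}
for name, verdict in results.items():
    print(f"{name}: {verdict}")
```

Anything that never touches a face, voice, or body exits early, so reviewer time is spent only on the assets where materiality is actually in question.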

Your Q3/Q4 Compliance Audit Checklist

If you are running any AI-generated creative in your campaigns, the time to audit is before the June 9 and August 2 deadlines, not after. If you have AI-generated ads already live without disclosure, pause them and add labels before restarting. No enforcement actions have targeted pre-deadline campaigns yet, but leaving non-compliant creative running past the effective dates creates unnecessary exposure.
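To see which rules apply on a given flight date, a small lookup is enough. This is a rough sketch using only the effective dates quoted in this guide; the `rules_in_effect` helper and the dictionary labels are hypothetical names.

```python
from datetime import date

# Effective dates as stated in this guide.
EFFECTIVE_DATES = {
    "New York Synthetic Performer Disclosure Law": date(2026, 6, 9),
    "EU AI Act Article 50 transparency provisions": date(2026, 8, 2),
    "California AI metadata mandate": date(2026, 8, 2),
}


def rules_in_effect(on: date) -> list[str]:
    """Return the rules whose effective date is on or before the given day."""
    return [name for name, start in EFFECTIVE_DATES.items() if on >= start]


for flight_date in (date(2026, 6, 8), date(2026, 6, 9), date(2026, 8, 2)):
    active = rules_in_effect(flight_date)
    print(flight_date.isoformat(), "->", active if active else "none yet")
```

Running this against a campaign's planned end date, not just its start date, shows whether creative launched before a deadline will still be live once a rule applies.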

1. Creative audit: flag every AI-generated asset across campaigns

Apply the Transparency Trigger described above to every asset in your library. The question for each one is the same: “Does this creative contain an AI-generated or substantially modified human likeness?” If the answer is yes, that asset needs disclosure. If no, it can proceed without additional labeling.

2. Disclosure audit: ensure on-ad labels + platform metadata for every flagged asset

For every asset you flagged in the creative audit, verify two layers of compliance. The consumer-facing layer means an on-ad label (a text disclosure, badge, icon, or watermark) that is “conspicuous” in the legal sense: prominent, unavoidable, and noticeable, not buried in fine print.

The machine-readable layer means C2PA metadata embedded in the file itself. Check that your AI tool provider has not stripped this metadata during export or format conversion. Screenshots, social media uploads, and file conversions can all remove embedded metadata, so verify the final published version, not just the original export.
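The two-layer check can be expressed as a simple gap report. The sketch below assumes someone has already inspected the final published version and recorded the results by hand or with a separate metadata tool; `PublishedAsset` and `disclosure_gaps` are hypothetical names, and the code does not read C2PA data itself.

```python
from dataclasses import dataclass


@dataclass
class PublishedAsset:
    """Inspection results for the live version of one asset (hypothetical)."""
    name: str
    flagged: bool            # outcome of the creative audit
    has_on_ad_label: bool    # consumer-facing layer, checked on the live ad
    has_c2pa_metadata: bool  # machine-readable layer, checked on the published file


def disclosure_gaps(asset: PublishedAsset) -> list[str]:
    """Return the missing layers for a flagged asset, or an empty list."""
    if not asset.flagged:
        return []
    gaps = []
    if not asset.has_on_ad_label:
        gaps.append("on-ad label")
    if not asset.has_c2pa_metadata:
        gaps.append("C2PA metadata")
    return gaps


library = [
    PublishedAsset("avatar_hook_v3", True, True, False),
    PublishedAsset("creator_demo_v1", False, False, False),
]

for asset in library:
    print(asset.name, "->", disclosure_gaps(asset) or "ok")
```

In this example the first asset carries a label but lost its metadata somewhere between export and upload, which is the failure mode described above.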

3. Operations check: contracts, platform settings, and documentation

Contracts. For every active creator relationship, confirm the agreement covers your current usage (organic, paid, whitelisting), verify geographic scope matches where your ads actually run, and check whether exclusivity terms are still active.

Platform settings. Each platform has its own disclosure mechanism and they need to be checked independently. On Meta, verify the AI disclosure checkbox is selected in Ads Manager. On TikTok, confirm the self-disclosure toggle is on. On YouTube, select the “altered or synthetic content” disclosure in the upload flow.
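Because each platform is checked independently, a per-placement checklist helps keep track of what is still unconfirmed. This sketch only tracks manual confirmations; it does not call any platform API, and `outstanding_settings` is a hypothetical helper name.

```python
# Disclosure mechanisms as described in this guide.
PLATFORM_SETTINGS = {
    "Meta": "AI disclosure checkbox selected in Ads Manager",
    "TikTok": "self-disclosure toggle switched on",
    "YouTube": "'altered or synthetic content' disclosure selected at upload",
}


def outstanding_settings(placements: dict[str, bool]) -> list[str]:
    """List the settings not yet confirmed for the platforms an asset runs on."""
    todo = []
    for platform, confirmed in placements.items():
        if not confirmed:
            setting = PLATFORM_SETTINGS.get(platform, "check this platform's disclosure policy")
            todo.append(f"{platform}: {setting}")
    return todo


# Hypothetical asset running on two platforms, one of them confirmed.
placements = {"Meta": True, "TikTok": False}
for item in outstanding_settings(placements):
    print("TODO", item)
```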

Audit trail. For each AI-generated asset, log the tool used, the prompt or input, the output, who approved it, and when. Define your pass/fail criteria upfront so your team has clear thresholds rather than case-by-case judgment calls.
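An audit trail entry can be as simple as one JSON line per asset. The sketch below is one possible shape, not a required format: the `AuditEntry` fields mirror the items listed above, and the field names, file reference, and example values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    asset_id: str
    tool: str         # AI tool used
    prompt: str       # prompt or input given to the tool
    output_ref: str   # where the generated output is stored
    approved_by: str
    approved_at: str  # ISO 8601 timestamp in UTC


def new_entry(asset_id: str, tool: str, prompt: str,
              output_ref: str, approved_by: str) -> AuditEntry:
    """Create an entry stamped with the current UTC time."""
    stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
    return AuditEntry(asset_id, tool, prompt, output_ref, approved_by, stamp)


def to_log_line(entry: AuditEntry) -> str:
    """Serialize an entry as a single JSON line for an append-only log."""
    return json.dumps(asdict(entry), sort_keys=True)


entry = new_entry(
    asset_id="hook-017",
    tool="example-avatar-tool",
    prompt="30s intro, friendly tone",
    output_ref="assets/hook-017.mp4",
    approved_by="j.doe",
)
line = to_log_line(entry)
print(line)
```

An append-only text log keeps the record tool-agnostic, so it survives a change of AI vendor or project management system.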

Why Human UGC Sidesteps Most of This

Every regulation, framework, and platform policy covered in this guide targets AI-generated content specifically. Real people filmed on camera fall outside every AI disclosure definition, whether it is NY’s “synthetic performer,” the EU’s “deep fake,” or any platform’s labeling policy.

The performance case: attention metrics, authenticity premium, trust data

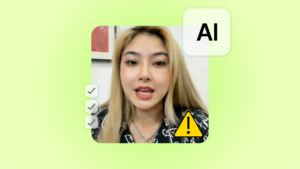

The compliance advantage of human UGC is clear, but the performance case is worth stating as well. In controlled testing, human UGC generally achieves stronger attention metrics, with AI UGC videos typically reaching 85% to 110% of the click-through rate of well-performing traditional UGC. The gap widens in categories where trust and authenticity drive the purchase decision, which includes most DTC verticals.

The consumer trust data reinforces this. The Yahoo and Publicis Media survey found that while proactive AI disclosure helps compared to hidden use, human-created content avoids the trust question entirely. There is no “authenticity penalty” to manage, no perception gap to bridge, and no disclosure label that might lower perceived naturalness or purchase willingness. The content is what it appears to be.

Human UGC vs AI UGC vs Hybrid

Choosing between human creators, AI-generated content, and hybrid approaches used to be a performance and budget question. In 2026, it is also a compliance question. Each format carries a different regulatory footprint, and the right choice depends on where your ads run, what category you operate in, and how much disclosure overhead your team can absorb.

| | Human UGC | AI UGC | Hybrid (AI-edited human footage) |
|---|---|---|---|
| Compliance risk | Lowest. Real people on camera fall outside every synthetic content definition. | Highest. Triggers disclosure in NY, EU, CA, and on all major platforms. | Variable. Depends on whether AI materially alters the human’s likeness or statements. |
| Disclosure required | Standard FTC #ad/#sponsored tags only (same as pre-AI era). | On-ad labels + platform metadata + C2PA where applicable. Every asset needs a compliance check. | Case-by-case. Apply the Transparency Trigger described above. |
| Cost per asset | $150 to $400 per video. | Roughly $3 per video. | Mid-range. Human filming costs plus AI editing/iteration on top. |
| Speed | 5 to 14 days from brief to delivery (creator matching, filming, revision cycles). | Fast. Generate, review, publish. | 7 to 10 days. Human shoot plus AI post-production. |
| Performance | Higher attention metrics. A $100K test across 220 video creatives found human UGC achieves 2.4% CTR vs 1.9% for AI. | Higher ROAS at scale (2.8x vs 2.3x in the same test) because lower production cost allows more testing volume. | Depends on execution. Can capture human authenticity with AI-powered iteration speed. |
| Consumer trust | Highest. 98% of consumers say authentic content is pivotal for trust. No “AI label” penalty on perceived naturalness or purchase intent. | Lowest. Labeling an ad as AI-generated lowers perceived naturalness and purchase willingness. | Mixed. Trust depends on how visible the AI modification is and whether the human elements feel authentic. |
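The performance row is easier to compare when both gaps are expressed as relative differences. The short calculation below uses only the CTR and ROAS figures from the table; `relative_lift` is a hypothetical helper name.

```python
def relative_lift(a: float, b: float) -> float:
    """Percentage by which a exceeds b."""
    return (a - b) / b * 100


ctr_lift = relative_lift(2.4, 1.9)   # human CTR over AI CTR
roas_lift = relative_lift(2.8, 2.3)  # AI ROAS over human ROAS

print(f"Human UGC CTR advantage: {ctr_lift:.1f}%")
print(f"AI UGC ROAS advantage:  {roas_lift:.1f}%")
```

On these numbers the human CTR advantage is roughly 26% and the AI ROAS advantage roughly 22%, so the two formats lead on different metrics by a similar margin.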

Which Approach Fits Which Scenario

The table above gives you the data. Here is how to apply it to common campaign scenarios.

For high-volume creative testing where you need 20 or more variants per concept, AI UGC makes economic sense. The cost advantage is enormous and the iteration speed lets you test hooks, CTAs, and angles that would be prohibitively expensive with human creators. But every single variant needs to pass through a disclosure compliance check before it goes live, so factor that operational cost into your planning. Producing multiple ad variations from human-filmed content is another, safer route here.

For hero creatives and brand campaigns where authenticity drives the message, human UGC is the stronger choice. It carries no disclosure burden beyond standard sponsored content tags, and the attention metrics consistently outperform AI alternatives. When consumers see a real person talking about a product, there is no trust penalty to manage.

Some teams are experimenting with a hybrid split: 20% of the creative budget on human UGC hero content and 80% on AI-powered iteration. On paper, that maximizes testing volume. In practice, once you factor in the disclosure compliance overhead on every AI asset, plus the trust penalty data above, the effective cost gap between human and AI creative narrows considerably.
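A back-of-the-envelope calculation shows how compliance overhead changes the cost ratio. The production costs below come from this guide, but the review time and hourly rate are purely hypothetical placeholders to swap for your own numbers; the trust penalty is not modeled here.

```python
def effective_cost(production_cost: float, review_minutes: float,
                   hourly_rate: float) -> float:
    """Production cost plus the staff time spent on compliance per asset."""
    return production_cost + review_minutes / 60 * hourly_rate


AI_PRODUCTION = 3.0   # per video, from this guide
HUMAN_LOW = 150.0     # low end of the human UGC range, from this guide
REVIEW_MINUTES = 15.0  # hypothetical: label, metadata, toggle, audit entry
HOURLY_RATE = 60.0     # hypothetical staff cost per hour

ai_effective = effective_cost(AI_PRODUCTION, REVIEW_MINUTES, HOURLY_RATE)
nominal_ratio = HUMAN_LOW / AI_PRODUCTION
effective_ratio = HUMAN_LOW / ai_effective

print(f"AI effective cost per asset: ${ai_effective:.2f}")
print(f"Human-to-AI cost ratio: {nominal_ratio:.0f}x nominal, {effective_ratio:.1f}x effective")
```

Under these placeholder assumptions the gap shrinks from 50x to about 8x at the low end of the human price range. The real figure depends entirely on how long your team's compliance check takes.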

Creator Rights and Licensing: What the AI Wave Changes

Most brands running UGC at scale already understand the basics of creator licensing: scope, duration, exclusivity, geographic rights. What the AI compliance wave changes is one specific area that many existing contracts do not cover: modification rights, specifically whether your brand can use AI tools to alter creator footage after delivery, and what happens to your compliance obligations when it does.

Why your contracts need an AI modification clause

The most common source of UGC disputes is a mismatch between what the contract permits and how the brand actually uses the content. In 2026, the highest-risk version of that mismatch is a brand taking human creator footage, running it through an AI tool that substantially alters the creator’s likeness or voice, and publishing it without disclosure. At that point, the content may cross the Transparency Trigger threshold described earlier, turning a compliance-safe human UGC asset into one that requires synthetic content disclosure.

Your creator contracts should now explicitly address: whether the brand can use AI tools to modify the footage (trimming and color grading are different from face-swapping or voice-cloning), who is responsible for disclosure if AI modification crosses the materiality threshold, and whether the creator consents to their likeness being used as a training input or reference for AI-generated variations.
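The three contract points can be tracked as a simple checklist across active agreements. This is an illustrative sketch only: the clause keys and the `missing_ai_clauses` helper are hypothetical names, and deciding whether a clause is actually covered still requires reading the contract.

```python
# The three points a creator contract should address, per this guide.
AI_CLAUSES = (
    "ai_modification_scope",      # which AI edits the brand may apply
    "disclosure_responsibility",  # who discloses if edits become material
    "likeness_training_consent",  # consent to AI training or reference use
)


def missing_ai_clauses(contract: dict) -> list[str]:
    """Return the AI clauses that a reviewer has not marked as covered."""
    return [clause for clause in AI_CLAUSES if not contract.get(clause)]


# Hypothetical review result for one creator agreement.
contract_review = {
    "creator": "creator-042",
    "ai_modification_scope": True,
    "disclosure_responsibility": False,
}

print(missing_ai_clauses(contract_review))
```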

How Billo fits into this

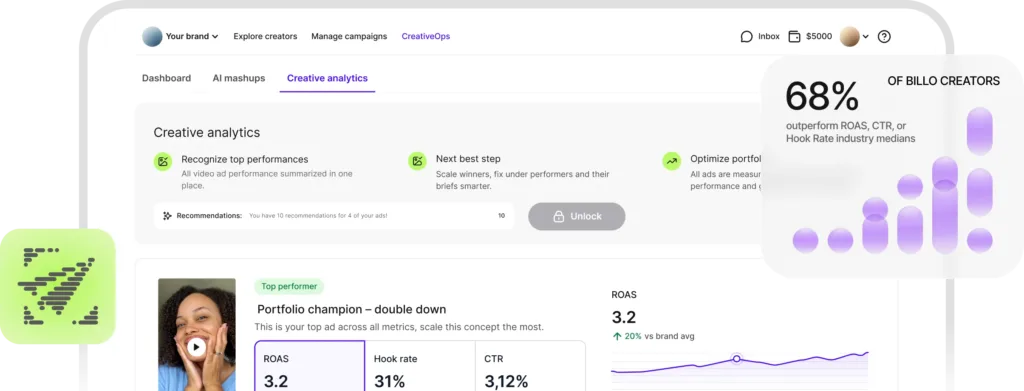

Every AI-generated asset needs a disclosure check, a metadata verification, a platform toggle, and an audit trail entry. For teams running dozens of creatives per month, that compliance overhead adds up fast.

Billo sidesteps most of it by design. Every piece of content on the platform is filmed by a real human creator on camera, which means it passes the Transparency Trigger automatically. No synthetic performer disclosure, no metadata requirements, no platform labeling beyond standard #ad tags. Commercial usage rights are included in every order, covering paid ads, organic, and whitelisting without separate licensing negotiations. The briefing and review workflow is built into the platform, so scope, deliverables, and revision terms are defined upfront.

One note on how Billo uses AI: the platform’s creator matching and brief optimization are powered by AI, but that AI operates on workflow and data analysis, not on the content itself. The videos your creators film are entirely human-made, which is the distinction that matters for every disclosure framework covered in this guide.

Summary & Next Steps

The 2026 regulatory wave is real but manageable. If you take one thing from this AI ad compliance guide, make it this principle: if AI creates or substantially modifies a human likeness in your advertising, disclose it. If it does not, proceed normally.

Three steps to take now:

- Run the Q3/Q4 audit checklist before June 9 (the first major deadline). Flag every AI-generated asset, verify disclosure labels and platform metadata, and confirm your creator contracts cover the usage rights you need.

- Update your compliance documentation after August 2, when both the EU AI Act transparency provisions and California’s metadata mandate take effect. The regulatory landscape will shift again, and your audit trail needs to reflect the current requirements.

- Build the Transparency Trigger into your standard creative workflow. Before any asset goes into production, ask whether AI will generate or substantially modify a human likeness. If yes, plan for disclosure from the start. If no, move forward without it. This single question, asked consistently, eliminates most compliance guesswork.

The simplest compliance path remains the most direct one: use human creators and reserve AI for analysis and minor editing.

FAQs

Do I need to disclose AI in my video ads?

Yes, if AI generates or substantially modifies a human likeness, including faces, voices, and bodies. Standard production uses like color grading, noise removal, background blur, and AI-powered copy suggestions do not trigger disclosure under any current framework.

Does the EU AI Act apply to US brands?

Yes, if your AI-generated content reaches EU audiences. The Act has extraterritorial scope and applies to any company whose AI system output is used in the EU, regardless of where that company is headquartered. Penalties for transparency violations can reach €15 million or 3% of global annual turnover, whichever is higher.
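The “whichever is higher” rule is easy to work through with numbers. The sketch below computes only the ceiling described above, using integer euros; `max_transparency_fine` is a hypothetical helper name and the turnover figures are made-up examples.

```python
EUR_FLAT_CAP = 15_000_000   # flat ceiling stated in this guide
TURNOVER_SHARE_PERCENT = 3  # share of global annual turnover


def max_transparency_fine(global_turnover_eur: int) -> int:
    """Upper bound of the fine: the higher of the flat cap and 3% of turnover."""
    turnover_based = global_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(EUR_FLAT_CAP, turnover_based)


for turnover in (200_000_000, 1_000_000_000):
    print(f"Turnover EUR {turnover:,} -> ceiling EUR {max_transparency_fine(turnover):,}")
```

The flat €15 million figure is the binding ceiling for any company with global turnover below €500 million; above that, the 3% figure takes over.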

What is a synthetic performer?

Under New York’s Synthetic Performer Disclosure Law, which takes effect June 9, 2026, a synthetic performer is any digital asset created or modified by generative AI that is intended to give the impression of a human performer but is not recognizable as any identifiable real person.

What AI editing doesn’t require disclosure?

Color grading, noise removal, background blur, AI-powered copywriting suggestions, bid optimization, and audience targeting algorithms are all exempt under current frameworks. The line is drawn at “materiality”: whether AI use could mislead consumers about the content’s authenticity or a person’s identity.

