The US AI Regulations: What Brands Need to Know Before June 2026

Passionate content and search marketer aiming to bring great products front and center. When not hunched over my keyboard, you will find me in a city running a race, cycling or simply enjoying my life with a book in hand.

A guide for DTC brands, agencies, and performance teams running UGC and paid social in 2026.

If you’re running paid social on Meta, TikTok, or YouTube right now, the legal ground underneath your ad creative is shifting fast. There are no federal US AI regulations on the books. Instead, brands and agencies face a growing patchwork of state-level rules and federal enforcement actions, each with its own definitions, penalties, and timelines.

New York requires disclosure of AI-generated performers in ads starting June 9. California mandates invisible metadata on all AI-created content by August 2. Tennessee has already made unauthorized AI voice cloning a criminal offense. And the FTC is treating undisclosed AI in marketing as a deceptive practice, with enforcement actions up 40% in 2025 alone.

To make this concrete: if you’re a supplements brand running Meta ads with AI-generated testimonials, you’re now exposed on multiple fronts at once. The FTC’s fake reviews ban, New York’s synthetic performer disclosure, and potentially Tennessee’s voice-likeness rules all apply to different aspects of the same campaign. And they each come with different penalties, different timelines, and different enforcement bodies.

This post breaks down the five jurisdictions that matter most for brands and agencies producing user-generated content (UGC), paid social ads, and performance creative in 2026.

TL;DR

- New York (June 9, 2026): Disclose AI-generated performers in ads. $1,000 – $5,000 per violation.

- Colorado (June 30, 2026): AI ad targeting in regulated categories requires annual impact assessments. Enforcement paused pending DOJ litigation.

- California (August 2, 2026): AI content needs visible labels + embedded metadata. Stripping metadata = license revocation. $5,000/day.

- Tennessee (live since July 2024): AI voice clones without consent are a criminal misdemeanor. Up to 11 months jail + $2,500 fine.

- FTC (ongoing): Undisclosed AI endorsements cost up to $51,744 per violation. Sponsored AI content requires double disclosure.

The safest play: comply with the strictest state standard now. Federal preemption is being debated, but no court has overturned a state AI law yet.

Quick-reference comparison

| Law | What triggers it | Who’s liable | Penalty | Human UGC avoids it? |
|---|---|---|---|---|
| NY Synthetic Performer (Jun 9) | AI-generated person in an ad | Brand, agency, production company | $1K first, $5K subsequent | Yes – real creators on camera aren’t covered |
| CO AI Act (Jun 30) | AI targeting in regulated categories | Deployer (brand using the AI system) | $20K/violation (enforcement paused) | N/A – applies to targeting algorithms, not creative |
| CA SB 942 (Aug 2) | AI content without metadata/labels | Covered AI providers + licensees who strip data | $5K/violation/day | Yes – no metadata requirements for human content |
| TN ELVIS Act (live) | AI voice clone of identifiable person | Creator, brand, platform, tech provider | Criminal: up to 11 mo jail + $2.5K | Yes – real voices carry no likeness risk |
| FTC (ongoing) | Undisclosed AI endorsements, fake reviews | Advertiser | Up to $51,744/violation | Mostly – still need #ad disclosure on paid content |
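As a rough illustration, the "What triggers it" column of the comparison table can be read as a set of yes/no checks on a campaign. The sketch below is a minimal Python version of that reading. The `Campaign` fields and the `triggered_rules` function are hypothetical names invented for this example, each rule is reduced to a single flag, and the sketch ignores where the ad actually runs.

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    # Hypothetical flags, one per trigger in the comparison table.
    ai_generated_performer: bool = False
    ai_targeting_regulated_category: bool = False
    ai_content_missing_labels_or_metadata: bool = False
    ai_voice_of_identifiable_person: bool = False
    voice_consent_on_file: bool = False
    undisclosed_ai_endorsement_or_fake_review: bool = False


def triggered_rules(c: Campaign) -> list[str]:
    """Return the table rows whose trigger matches this campaign."""
    rules = []
    if c.ai_generated_performer:
        rules.append("NY Synthetic Performer")
    if c.ai_targeting_regulated_category:
        rules.append("CO AI Act")
    if c.ai_content_missing_labels_or_metadata:
        rules.append("CA SB 942")
    # Consent removes the "unauthorized" element in this simplified model.
    if c.ai_voice_of_identifiable_person and not c.voice_consent_on_file:
        rules.append("TN ELVIS Act")
    if c.undisclosed_ai_endorsement_or_fake_review:
        rules.append("FTC")
    return rules


# The supplements example from the introduction: AI testimonials in a Meta ad.
supplements_ad = Campaign(
    ai_generated_performer=True,
    undisclosed_ai_endorsement_or_fake_review=True,
)
print(triggered_rules(supplements_ad))  # ['NY Synthetic Performer', 'FTC']
```

A campaign built only on real creators leaves every flag at its default and returns an empty list, which matches the last column of the table.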

See how the EU AI Act and global AI advertising laws can affect your campaigns.

New York: The “Creative Rule” (Effective June 9, 2026)

New York’s Synthetic Performer Disclosure Law is the first US regulation that specifically targets AI-generated people in advertising. Governor Hochul signed it on December 11, 2025 at SAG-AFTRA’s New York office, and it takes effect 180 days later on June 9, 2026.
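The effective date follows directly from the signing date plus 180 days. A quick check with Python's standard `datetime` module, using only the two dates stated above:

```python
from datetime import date, timedelta

signed = date(2025, 12, 11)               # signing date given in the text
effective = signed + timedelta(days=180)  # "180 days later"

print(effective.isoformat())  # 2026-06-09
```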

What the law covers

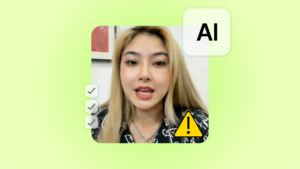

The law defines a “synthetic performer” as any digital asset created or modified by generative AI that is intended to give the impression of a human performer but is not recognizable as any identifiable real person. Think AI avatars, digital spokespeople, and simulated extras in video ads. The definition is broad enough to cover the outputs of most AI UGC ad tools that generate realistic-looking human presenters.

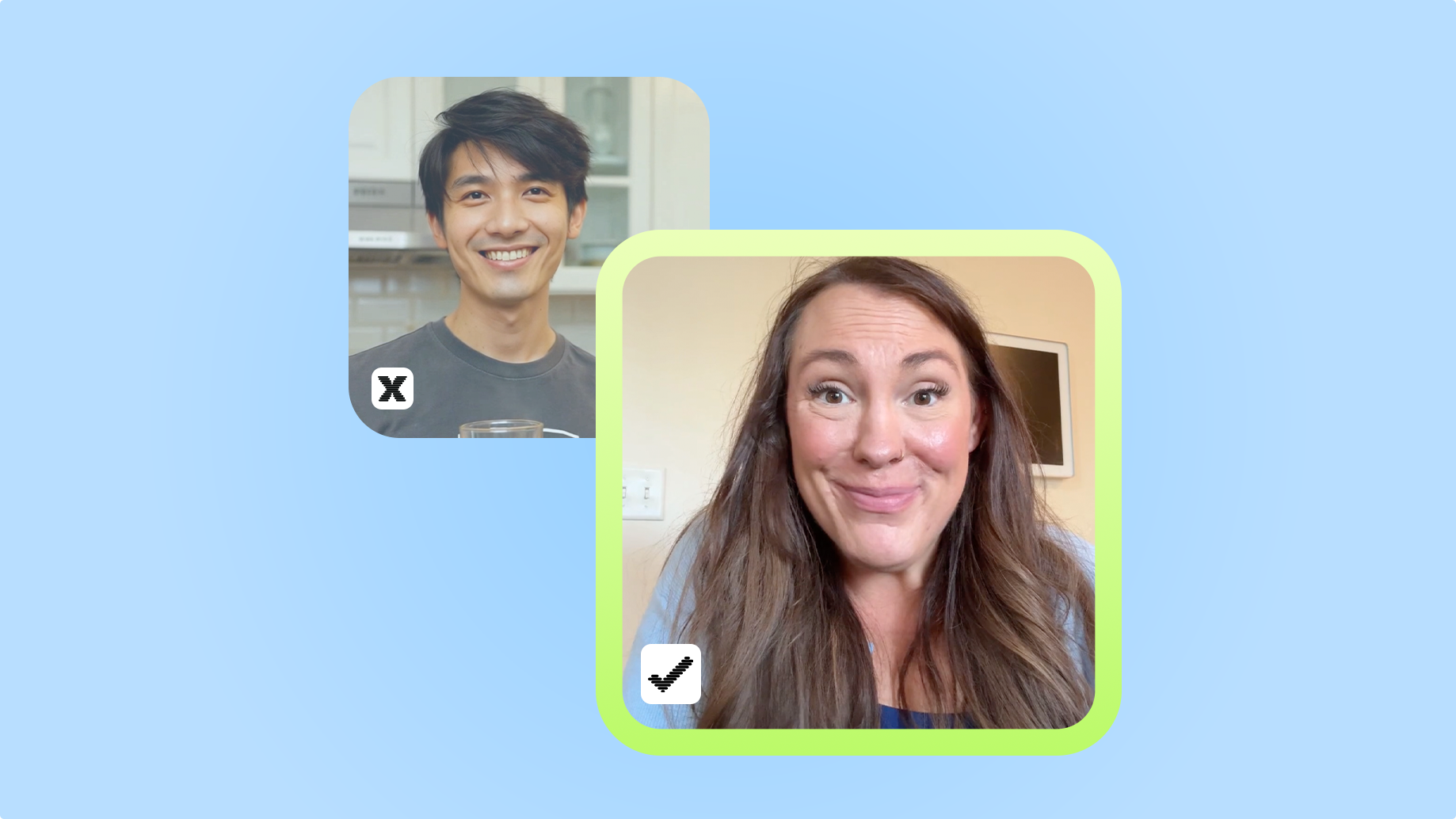

If your ads feature real human creators filmed on camera, this law does not apply to them. It specifically targets AI-generated humans, not AI-assisted editing of real footage.

Who is liable

Liability sits with whoever “produces or creates” the advertisement. That means the brand, the agency, or the production company. Platforms and media publishers disseminating the ad are explicitly exempt, so TikTok, Meta, and YouTube will not be held responsible for running your non-compliant ad. You will.

There’s one important qualifier: liability is triggered only by “actual knowledge.” If your brand genuinely didn’t know a synthetic performer was used (say, a production vendor inserted one without telling you), you may not be liable. But relying on that defense is risky. Legal analysts recommend tightening contracts with agencies and AI vendors to require upfront identification of any synthetic performer use, rather than relying on plausible deniability.

That said, the platforms have built their own enforcement layer that runs in parallel. We cover how TikTok, Meta, and YouTube auto-label AI ads in a companion post.

What you need to disclose (and how)

The law requires a “conspicuous” disclosure that a synthetic performer appears in the advertisement. This disclosure must appear in the ad itself, in every medium where it runs. If you run the same ad on Instagram, YouTube, and connected TV, each version needs the disclosure.

Here’s the catch: the law doesn’t specify exact wording, format, or placement. It doesn’t say whether you need on-screen text, a verbal callout, a watermark, or all three. That ambiguity will likely get clarified through enforcement actions and industry practice over the next year. In the meantime, err on the side of making it obvious.

Penalties

First violation: $1,000. Each subsequent violation: $5,000. There is no private cause of action, which means consumers cannot sue you directly. Enforcement comes from state authorities only.
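To see how the two-tier schedule adds up, here is a small sketch of the arithmetic. The function name `ny_penalty_exposure` is hypothetical, and how regulators will count a "violation" (per ad, per placement, per run) is an assumption left open here, since the text above does not say.

```python
def ny_penalty_exposure(violations: int) -> int:
    """Total fines in dollars for a given number of violations."""
    if violations < 0:
        raise ValueError("violations must be non-negative")
    if violations == 0:
        return 0
    # $1,000 for the first violation, $5,000 for each one after that.
    return 1_000 + 5_000 * (violations - 1)


print(ny_penalty_exposure(1))  # 1000
print(ny_penalty_exposure(4))  # 16000
```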

The human UGC angle: Ads featuring real creators filmed on camera are completely unaffected by this law. No disclosure required, no compliance overhead. The rule only kicks in when the “performer” is AI-generated.

Colorado: The “Targeting Rule” for Regulated Categories (Effective June 30, 2026)

Colorado’s AI Act (SB24-205) takes a different angle. Rather than targeting what appears in your ad creative, it governs how AI is used to decide who sees your ads and on what terms. The law covers “High-Risk AI Systems” making “consequential decisions” across eight categories including financial services, housing, insurance, and employment.

If you are a DTC brand in health and beauty, apparel, food and beverage, or software, this section likely does not apply to you. You can skip ahead to the federal preemption section.

If you are in fintech, insurance, real estate, or consumer lending and you use AI algorithms to target ads or influence pricing and approval decisions, you may be classified as a “deployer” of a high-risk system. Deployers must conduct annual impact assessments documenting algorithmic discrimination risks, notify consumers in plain language that AI is being used, and explain how AI factored into any adverse decision. Penalties reach $20,000 per violation per consumer or transaction.

One major caveat: on April 24, 2026, the DOJ intervened in a lawsuit filed by xAI challenging SB 205, and the Colorado Attorney General agreed to halt enforcement pending the litigation. The law has not been struck down, however. If the challenge fails, the June 30 deadline stands and enforcement could resume. Brands in regulated categories should continue preparing rather than treating this as a free pass.

California: The “Technical Rule” (Effective August 2, 2026)

Where New York focuses on what consumers see, California’s approach goes deeper into the technical layer. The AI Transparency Act (SB 942) was signed in September 2024. It was originally set to take effect in January 2026, and that date was later delayed to August 2, 2026.

The three requirements

SB 942 imposes three obligations on “Covered Providers,” defined as AI platforms with more than one million monthly users. That includes OpenAI, Midjourney, Adobe Firefly, Runway, and similar tools. The requirements are:

- A free, publicly accessible AI detection tool that lets anyone check whether content was created by the provider’s system.

- A manifest disclosure option: a visible watermark or label that users can choose to include on generated content.

- A mandatory latent disclosure: machine-readable metadata embedded in every piece of generated content, designed to be “extraordinarily difficult to remove.”

The distinction between manifest and latent matters for advertisers. Manifest labels are visible to consumers but can be cropped or removed from screenshots. Latent metadata travels with the file itself and enables platforms like TikTok and Meta to auto-detect AI-generated content even if visible labels have been stripped.

The metadata stripping liability

This is where the law gets teeth for agencies and brands. If a third-party licensee knowingly removes or disables the disclosure capability from a licensed AI system, the covered provider must revoke their license within 96 hours of discovery. The licensee then faces injunctive relief and is responsible for attorney’s fees.

In practical terms: if your creative team or agency downloads AI-generated assets, runs them through editing software that strips metadata, and publishes them as “organic” content in California, you are exposed. The AI provider is required to cut off your access, and you face legal action on top of that.

This is especially relevant for agencies managing creative at volume across multiple brand accounts, where batch editing workflows are more likely to strip provenance data without anyone noticing.
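One way to catch silent stripping in a batch workflow is to compare each source asset with its export and flag any file that lost its provenance marker. The sketch below is only an illustration of that idea. It assumes the provider's latent disclosure shows up as a known byte sequence in the file, and the `b"c2pa"` marker is a placeholder chosen for the example, not something SB 942 specifies. Watermarks embedded in the pixels themselves would not be detected this way. The function names are hypothetical.

```python
# Placeholder assumption: substitute whatever marker your AI provider documents.
PROVENANCE_MARKER = b"c2pa"


def has_marker(data: bytes, marker: bytes = PROVENANCE_MARKER) -> bool:
    """Crude heuristic: does the raw file content contain the marker?"""
    return marker in data


def stripped_exports(assets):
    """assets: iterable of (name, source_bytes, exported_bytes) tuples.

    Returns the names of assets whose source carried the marker
    but whose export no longer does.
    """
    return [
        name
        for name, source, exported in assets
        if has_marker(source) and not has_marker(exported)
    ]


# Toy in-memory data standing in for real files.
batch = [
    ("hero.jpg", b"\xff\xd8...c2pa...pixels", b"\xff\xd8...pixels"),
    ("cutdown.jpg", b"\xff\xd8...c2pa...pixels", b"\xff\xd8...c2pa...pixels"),
    ("creator_clip.mp4", b"human footage", b"human footage"),
]
print(stripped_exports(batch))  # ['hero.jpg']
```

In a real pipeline the byte strings would come from reading the files before and after export, and a purpose-built provenance verifier would be a more reliable check than a substring search.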

Penalties

Fines run $5,000 per violation per day of non-compliance. Enforcement comes from the California Attorney General, city attorneys, and county counsel. There is no private right of action. But $5,000 per day adds up fast if you are running multiple AI-generated creatives across campaigns.
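A back-of-the-envelope sketch of how the per-day figure compounds. The function name is hypothetical, and treating each non-compliant creative as its own violation is an assumption made for illustration.

```python
def ca_penalty_exposure(violations: int, days: int) -> int:
    """Dollars owed at $5,000 per violation per day of non-compliance."""
    if violations < 0 or days < 0:
        raise ValueError("violations and days must be non-negative")
    return 5_000 * violations * days


# Four non-compliant creatives left live for a 30-day flight.
print(ca_penalty_exposure(violations=4, days=30))  # 600000
```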

The human UGC angle: Content filmed by real creators carries no metadata liability. There’s nothing to watermark, nothing to strip, and no provenance trail to maintain. Your creative pipeline stays clean.

Tennessee: The “Voice Rule” (Live Since July 2024)

Tennessee’s ELVIS Act is easy to overlook because it is already in effect and has not yet produced headline-grabbing enforcement actions. But for agencies producing UGC ads with AI-generated voiceovers, it may be the most dangerous law on this list.

What the ELVIS Act does

The Ensuring Likeness Voice and Image Security Act (HB 2091 / SB 2096) was signed on March 21, 2024 and took effect on July 1, 2024. It passed unanimously (93-0 in the House, 30-0 in the Senate) and was the first US state law to explicitly protect voice from AI cloning.

Before this law, Tennessee’s 1984 right of publicity statute only covered name, photograph, and likeness. The ELVIS Act adds “voice” as a protected category, defining it as “a sound in a medium that is readily identifiable and attributable to a particular individual, regardless of whether the sound contains the actual voice or a simulation of the voice.”

That last part is key. It does not matter whether the AI actually used the person’s voice recordings. If the output sounds like them, you are in scope.

Criminal liability, not just civil

Most right-of-publicity laws in the US only provide civil remedies. Tennessee went further. Unauthorized AI voice cloning under the ELVIS Act is a Class A misdemeanor, carrying up to 11 months and 29 days of imprisonment plus a $2,500 fine.

The law also creates a novel liability category for technology providers. Anyone who distributes “an algorithm, software, tool, or other technology” whose “primary purpose or function” is creating unauthorized voice or likeness facsimiles faces direct liability. This could potentially reach the AI voice cloning platforms themselves, not just the people using them.

The agency risk with UGC

Here is the scenario that should concern brands: a UGC creator submits a video using an AI voice filter that sounds like a recognizable artist, influencer, or public figure. The brand approves it, runs it as a paid ad, and the rights holder’s team notices. Under the ELVIS Act, the brand is liable as a publisher if it “knew or reasonably should have known” the voice use was unauthorized. If the creator is a signed recording artist, the record label can also file suit.

No enforcement actions have been filed yet under the ELVIS Act, but legal analysts expect that to change as AI voice tools become more widespread. The practical advice: audit all AI voiceovers for likeness risk, require creators to declare any AI voice tools in their briefs, and get explicit consent when voice output could resemble an identifiable person.

The human UGC angle: When a real creator speaks in their own voice, there’s no likeness claim to worry about. The ELVIS Act targets AI simulations of identifiable voices, not real people talking on camera.

The FTC: The “Federal Floor” (Ongoing Enforcement)

While states are writing new laws, the FTC is using the authority it already has. Section 5 of the FTC Act prohibits unfair or deceptive practices, and the Commission has been applying that standard aggressively to AI claims in advertising since 2024.

The AI-washing sweep

The FTC brought at least a dozen “AI-washing” enforcement cases in 2025, targeting companies that overstated what their AI products could actually do. The most notable recent action: the Air AI settlement in March 2026, which resulted in an $18 million judgment (largely suspended due to inability to pay) and a permanent ban barring the operators from marketing business opportunities.

These cases are not just about AI companies. They set a clear precedent: if you claim your product uses AI in ways it does not, or if you overstate AI capabilities in your marketing, the FTC will treat that as deceptive advertising.

Fake reviews and the double disclosure

In August 2024, the FTC finalized a rule banning fake AI-generated reviews and testimonials “by someone who does not exist.” That rule has been enforceable since October 2024. If your brand is using AI to generate customer testimonials, product reviews, or endorsement content that implies a real person had a real experience, you are in violation.

Separately, the FTC now expects double disclosure on sponsored AI content. If a piece of content is both paid and AI-generated, both facts must be disclosed separately. In video, both disclosures should appear in the first three to five seconds as on-screen text. One label does not cover both. In practice, this means a TikTok Spark Ad where the creator used AI tools for voiceover or visual effects needs both “#ad” and an AI disclosure visible at the start of the video, not buried in the caption.
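The double disclosure rule described above can be expressed as a simple pre-flight check on a video ad. This is a minimal sketch under stated assumptions: the `Label` class, the `"paid"` and `"ai"` kinds, and the `missing_disclosures` function are hypothetical names, and the five-second cutoff takes the upper end of the "three to five seconds" window mentioned in the text.

```python
from dataclasses import dataclass

MAX_START_SECONDS = 5.0  # upper end of the "first three to five seconds" window


@dataclass
class Label:
    kind: str        # "paid" (e.g. "#ad") or "ai"; hypothetical categories
    shown_at: float  # seconds from video start when the on-screen text appears


def missing_disclosures(is_paid: bool, uses_ai: bool, labels: list[Label]) -> list[str]:
    """Return the disclosure kinds that are required but not shown early enough."""
    shown_early = {label.kind for label in labels if label.shown_at <= MAX_START_SECONDS}
    required = set()
    if is_paid:
        required.add("paid")
    if uses_ai:
        required.add("ai")
    return sorted(required - shown_early)


# A Spark Ad with "#ad" on screen at 0.5s but no AI label.
print(missing_disclosures(True, True, [Label("paid", 0.5)]))  # ['ai']
# Both labels present, but the AI label only appears at 12s.
print(missing_disclosures(True, True, [Label("paid", 0.5), Label("ai", 12.0)]))  # ['ai']
# Both labels inside the window.
print(missing_disclosures(True, True, [Label("paid", 0.5), Label("ai", 1.0)]))  # []
```

Caption-only disclosures are deliberately not modeled as labels here, since the text above says they should not be buried in the caption.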

Penalty scale

The numbers are significant. Undisclosed synthetic endorsements can now cost up to $51,744 per violation (2026 amount). Growth Cave settled with the FTC for $48.6 million over misleading AI claims. accessiBe paid $1 million. The FTC does not need new legislation to pursue these cases. Section 5 is broad enough to cover deceptive AI practices across every marketing channel.

The human UGC angle: Real testimonials from real customers don’t trigger the fake reviews ban, and organic creator content doesn’t need the AI-generation disclosure layer. You still need to disclose the paid partnership, but that’s one label instead of two.

The Federal Preemption Wild Card

Running underneath every state rule above is a federal push to make them irrelevant. On December 11, 2025, the White House issued an executive order establishing a “minimally burdensome national policy framework” for AI. It created a DOJ AI Litigation Task Force with a mandate to challenge state AI laws as unconstitutional under the Commerce Clause or First Amendment, directed the FTC to clarify when state laws are preempted, and threatened to restrict federal broadband funding to states with “onerous” AI legislation.

That task force made its first move on April 24, 2026, joining xAI’s lawsuit against Colorado’s SB 205. On the legislative side, the TRUMP AMERICA AI Act (a March 2026 discussion draft from Senator Blackburn) is the most comprehensive federal AI bill proposed so far, but it explicitly preserves “generally applicable” state regulations and does not deliver blanket preemption. Congress has rejected broad preemption language in prior bills.

Here is what matters for your planning: an executive order cannot unilaterally preempt state law. Only Congress or the courts can, and neither has. No court has ruled any state AI law unconstitutional, and the expected timeline for resolution is two to four years. The practical advice from legal experts is consistent: build your compliance program around the strictest applicable state requirements. The rules you follow today could be overruled by 2027 or 2028, but they have not been overruled yet, and betting on that outcome is a compliance risk, not a compliance strategy.

What To Do Next

The action items across these five jurisdictions converge into a few priorities:

- Audit your current campaigns for synthetic performers and AI voice use,

- Make sure your creative pipeline preserves metadata rather than stripping it,

- Update creator briefs with AI disclosure clauses,

- Implement double disclosure on any sponsored content that involves AI.

Each of those steps involves real workflow decisions, from how you structure creator contracts to how you handle metadata on export. Here is a full compliance guide that walks through those decisions in detail, including a framework for which types of AI use trigger disclosure and which do not, a decision table comparing human UGC, AI UGC, and hybrid approaches by compliance risk and cost, and a step-by-step audit checklist for your Q3 and Q4 campaigns.

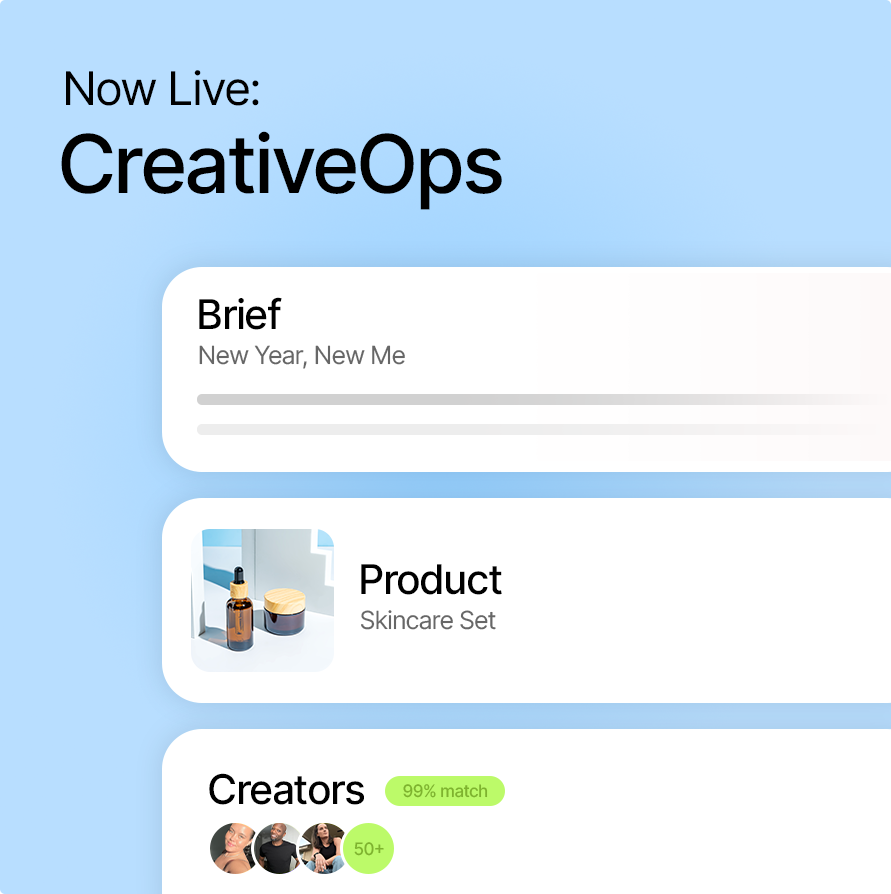

Alternatively, here is how a human-creator pipeline works in practice. Billo’s creative operations workflow handles briefing, creator matching, rights, and content delivery in one place, with none of the disclosure, metadata, or voice-likeness risks that come with AI-generated creative.

Summary and next steps

The US AI advertising landscape in 2026 is defined by a state-by-state patchwork plus accelerating federal enforcement. There is no single rule to follow, but there is a clear hierarchy of urgency:

New York’s synthetic performer law hits first on June 9, requiring visible disclosure in any ad featuring AI-generated humans. Colorado’s algorithmic discrimination requirements are set for June 30, though enforcement is paused pending DOJ litigation. California’s technical metadata rules take effect August 2, adding invisible provenance requirements on top of visible labeling. Tennessee’s voice protection law is already live and carries criminal penalties. And the FTC is pursuing AI-related deception under existing authority, with penalties climbing past $51,000 per violation.

The federal government is actively trying to preempt these state laws through litigation and executive action, but no court has struck any of them down. The expert consensus is to comply with the strictest applicable standard today and build enough flexibility to adjust when the federal picture clarifies.

For brands and agencies running UGC ads at volume, one pattern is becoming hard to ignore: as disclosure requirements, metadata mandates, and liability risks stack up around AI-generated content, working with authentic human creators is increasingly the path of least resistance. Real creators filmed on camera do not trigger synthetic performer disclosure laws, do not require embedded provenance metadata, and do not carry voice-likeness liability. The compliance overhead for human UGC is simply lower, and that gap widens with every new regulation that takes effect.

FAQs

Does the NY law apply if my brand is based outside New York?

Yes. The law applies to any advertisement containing a synthetic performer that runs in New York, regardless of where the brand is headquartered. If your social media ads target NY audiences or run on platforms accessible in the state, you are in scope.

What is the difference between NY’s disclosure rule and California’s metadata rule?

New York requires a visible, “conspicuous” disclosure to consumers within the ad itself. California’s SB 942 goes further by also requiring invisible, machine-readable metadata (a “latent disclosure”) embedded in the content file. New York is about what consumers see. California is about what platforms and detection tools can read, even if the visible label has been removed.

Can I face criminal charges for using AI in ads?

In Tennessee, yes. The ELVIS Act makes unauthorized AI voice cloning a Class A misdemeanor, carrying up to 11 months and 29 days of imprisonment plus a $2,500 fine. This applies specifically to voice: if your ad uses an AI-generated voice that is identifiable as a real person and you do not have their consent, criminal liability is on the table. Other states on this list impose only civil penalties.

Should I wait for a federal standard before complying with state laws?

No. State laws are fully enforceable right now. The Trump administration’s executive order signals intent to preempt state AI regulation, but an executive order cannot override state law on its own. Only Congress or the courts can do that, and neither has acted yet. If you wait and the state law survives its legal challenge, you are liable for every violation during the period you chose not to comply. The safest path is to build for the strictest current standard and adjust later.

